- Methodology article

- Open access

- Published:

Power and sample size estimation in microarray studies

BMC Bioinformatics volume 11, Article number: 48 (2010)

Abstract

Background

Before conducting a microarray experiment, one important issue that needs to be determined is the number of arrays required in order to have adequate power to identify differentially expressed genes. This paper discusses some crucial issues in the problem formulation, parameter specifications, and approaches that are commonly proposed for sample size estimation in microarray experiments. Common methods for sample size estimation are formulated as the minimum sample size necessary to achieve a specified sensitivity (proportion of detected truly differentially expressed genes) on average at a specified false discovery rate (FDR) level and specified expected proportion (π1) of the true differentially expression genes in the array. Unfortunately, the probability of detecting the specified sensitivity in such a formulation can be low. We formulate the sample size problem as the number of arrays needed to achieve a specified sensitivity with 95% probability at the specified significance level. A permutation method using a small pilot dataset to estimate sample size is proposed. This method accounts for correlation and effect size heterogeneity among genes.

Results

A sample size estimate based on the common formulation, to achieve the desired sensitivity on average, can be calculated using a univariate method without taking the correlation among genes into consideration. This formulation of sample size problem is inadequate because the probability of detecting the specified sensitivity can be lower than 50%. On the other hand, the needed sample size calculated by the proposed permutation method will ensure detecting at least the desired sensitivity with 95% probability. The method is shown to perform well for a real example dataset using a small pilot dataset with 4-6 samples per group.

Conclusions

We recommend that the sample size problem should be formulated to detect a specified proportion of differentially expressed genes with 95% probability. This formulation ensures finding the desired proportion of true positives with high probability. The proposed permutation method takes the correlation structure and effect size heterogeneity into consideration and works well using only a small pilot dataset.

Background

DNA microarray technology provides tools for studying the expression profiles of hundreds or thousands of distinct genes simultaneously. A fundamental goal in microarray studies is to identify a subset of genes that are differentially expressed under experimental conditions of interest. Before conducting a microarray experiment, one important issue that needs to be determined is the number of arrays (replicates) required in order to have adequate power to identify differentially expressed genes.

Many sample size estimation methods have been developed for various Type I error specifications, such as family-wise error rate (FWE) [1–3], false discovery rate (FDR) [4–8], and the number of false positives [7, 9]. The sample size for a microarray study is commonly calculated as the number of arrays needed to achieve the specified power on average (e.g., [3–6, 9, 10]). The power, the proportion of truly differentially expressed genes expected to be detected, is known as the sensitivity. With the sample size estimate that is calculated to achieve a specified sensitivity on average, the proportion of truly differentially expressed genes detected would frequently be less than the average. Consequently, the sample size calculated tends to give an over-optimistic outcome. Alternatively, Wang and Chen [2], Tsai et al. [7] and Shao and Tseng [8] proposed an alternative formulation: the sample size is calculated to ensure detecting at least the specified sensitivity level with a specified probability. This will be referred to as the (confidence) probability formulation.

When the sample size problem is formulated to achieve the specified sensitivity on average, we will show that the needed sample size can be simply calculated using the univariate sample size formula without considering dependency among genes. On the other hand, if the problem is formulated to achieve a specified sensitivity with a specified probability, then it requires estimating a percentile of the distribution of sensitivity. In this case, the dependency among genes needs to be taken into consideration. Tsai et al. [7] presented an approach for controlling the comparison-wise error rate (CWER) under the model of independent or equi-correlated normal distribution with a constant power for all genes. Shao and Tseng [8] proposed a model-free procedure to estimate a general correlation matrix under the normal distribution. They used a dataset of 72 samples to illustrate an estimation of the correlation matrix. However, the size of pilot data is often small, 10 or fewer per group, and the estimated variances of the true positives are often negative (zero) resulting in poor estimate of sample size in our simulation study. Tibshirani [10] proposed a permutation method to estimate the FDR and average sensitivity for assessing a specific sample size. Tibshirani's method requires only a small number of pilot datasets and is completely model-free in the sense that no assumptions on the distribution, effect sizes, and correlations of the test statistics are required. However, the standard deviation estimate (standard error) of a test statistic depends on the sample size. A test statistic from a small sample size will have a larger variation than that from a larger sample size. Since the sample size of a pilot dataset is often small, the cutoff level based on a small pilot dataset often exceeds the true cutoff for needed samples and results in over-estimation of the needed sample size.

This paper presents an overview of the power and parameter specifications, and proposes a permutation procedure for sample size determination under the probability formulation ([2, 7, 8]). The approach of Tibshirani [10] is improved to attain a more correct permutation distribution by incorporation of an adjustment factor. The proposed method uses a small pilot dataset of 4 to 6 samples per group; the method requires fewer samples than the Tibshirani [10] method when the sample size for the pilot dataset is small relative to the needed sample size. When the sample size for the pilot dataset is large, the proposed method and the Tibshirani [10] method are equivalent.

Methods

Let m denote the number of genes studied in an array of which m0 and m1 are the numbers of non-differentially and differentially expressed genes, respectively. Given the significance level α (per comparison-wise error rate), the results of m tests can be summarized as a 2 × 2 table (Table 1).

V/m0 is the proportion of genes not differentially expressed that are declared significant, its expectation is the per comparison-wise error rate E(V)/m0 = α. V/R is the proportion of declared significant genes among the total number of significances declared that are, in fact, not differentially expressed. Its expectation is the false discovery rate E(V/R) = q, given R > 0. U/m1 is the proportion of truly differentially expressed genes that are correctly declared. In a diagnosis problem, this proportion is often referred as the true positive rate, or the sensitivity. By taking expectation, we have the "average sensitivity", E(U)/m1, denoted by λ.

Sample Size Estimation

In sample size estimation, m, m1, and the (standardized) effect size δ= (δ1,..., δm 1) for the differentially expressed genes are pre-specified by the investigator. Estimation of sample size needed to achieve the specified sensitivity λ0, on average, is straightforward. Since m1 and λ0 are pre-specified, given a FDR level q* the corresponding significance level for per comparison-wise error rate α can easily be calculated. Setting α = [m1λ0q*]/[m0 (1-q*)], the FDR will be controlled at q* for sufficiently large m1 and m0.

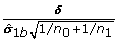

If δ i = δ0 is constant for all i, then the comparison-wise power (1 - β) of the univariate test is the same and exactly equal to λ0. Given α, δ0, and (1 - β) = λ0, the sample size can be based on the univariate sample size calculation and is given as

where t

α

and t

β

are the percentiles of a t-distribution. If δ

i

's are different, then β

i

= t-1 ( ) from Equation (1). The sample size n* can be calculated from the following equation

) from Equation (1). The sample size n* can be calculated from the following equation

The needed sample size is n = ⌈n*⌉, where ⌈n*⌉ is the smallest integer greater than or equal to n*. Given the sample size n as calculated, the outcome of a univariate test on a truly differentially expressed gene can be modeled by a Bernoulli random variable with the success probability at least (1 - β i ) since n ≥ n*. The expected number of true detections is at least m1λ0, regardless of the correlation structure among genes and hence the desired sensitivity can be achieved on average. Most sample size estimation methods are either based on this approach or extensions [3–6, 9–11]. However, the sample size calculated under this formulation is inadequate; a simple demonstration under an independent model is shown below.

Given m, π1 (= m1/m), a constant effect size δ i = δ0, q*, λ0, and the calculated sample size n (based on Equation 1), under the independent model, the total number of truly differentially expressed genes detected U is a binomial random variable with success probability (1 - β) (≥ λ0 since n ≥ n*). The probability ϕλ 0of identifying at least λ0 fraction of m1 differentially expressed genes can be calculated as the sum of the binomial probabilities [2, 7]:

The method of using Equation (1) to estimate sample size is referred to as the univariate method. Column 3-5 of Table 2 show the estimated sample size n, the average sensitivity λ and the probability ϕλ 0at λ0 = 0.6, 0.7, 0.8, 0.9. The parameters used in the calculation are: m = 2,000, π1 = 5%, 10%, 20%, δ0 = 2 and q* = 0.05. It can be seen that the probability ϕλ 0can be less than 60%. That is, using this formulation to calculate needed arrays may result in that an experiment will have the sensitivity less than the specified λ0 level with more than 40% probability.

Alternatively, Wang and Chen [2] formulated the problem as: the number of arrays needed to achieve the specified sensitivity λ0 with a probability ϕλ 0. In this formulation both λ0 and ϕλ 0need to be specified and not necessarily equal. The ϕλ 0is set at 95% since it is consistent with the common statistical practice of using the 95% confidence probability. Under this formulation, for specified λ0 the needed number of arrays is calculated so that the average sensitivity is greater than λ0 and the 5th percentile, λ5, of the distribution of the sensitivity U/m1 is greater than λ0:

In the independent and constant effect size model, Tsai et al. [7] used Equations (1) and (3) to estimate the needed sample size which is referred to as the Binomial method. Columns 6-8 of Table 2 show the estimated sample size n, the average sensitivity λ, and the probability ϕλ 0for λ0 = 0.6, 0.7, 0.8, 0.9. The probabilities in Column 8 are all higher than 95% due to n ≥ n*. The procedure will ensure detecting the specified proportion of differentially expressed genes with at least 95% probability.

In Table 2, the theoretical results indicate that the two methods give quite close sample size estimates. The difference of the estimates reflects the difference of the two formulations; when δ0 = 2, the difference is up to 1. For a given sensitivity, the needed sample size increases as the effect size δ0 decreasing, and the difference of the two formulations in the estimates is larger. We calculated the sample sizes using the same parameters as Table 2 for δ0 = 1. The sample size differences increase at about four times those of Table 2 (data not shown).

Permutation Method for Sample Size Estimation

Tibshirani [10] proposed a permutation method to account for both dependency and unequal effect sizes among genes using a pilot dataset for assessing sample size. This method is applied here to estimate the required sample size. Because the sample size of the pilot data is typically smaller than the needed sample size, the null distributions generated from the pilot data have more variations; simply using the null distributions generated from a small pilot dataset can overestimate the needed sample size. A procedure modified from the Tibshirani [10] method with adequate adjustment for sample size estimation is proposed below.

For simplicity, assume an equal sample size in each group, denoted as n = n0 = n1. Start with some pilot data with at least 4 samples per group, denoted as n0p and n1p for the control and treatment group, respectively. For specified m, m1, δ= (δ1, ..., δm 1), q*, and λ0, the algorithm for a two sample t-test is described as follows.

Algorithm: Sample Size Estimation (See additional file 1 for a software application)

-

1.

Set α = [m 1 λ 0 q*]/[m 0(1 - q*)], use the method of Tsai et al. [7] (Column 6 of Table 2) to find the needed sample size as the initial sample size n.

-

2.

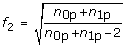

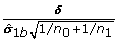

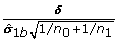

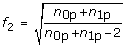

Compute the adjustment factor f = f 1 f 2 where

,

,  , and t df, pis the p th percentile of a t-distribution with df degrees of freedom.

, and t df, pis the p th percentile of a t-distribution with df degrees of freedom. -

3.

Generate the b-th permutation samples.

-

4.

Compute the t-statistics and sample standard deviations for the permutation samples for all genes.

-

5.

Multiply each t-statistic by the factor f and add

to a set of randomly selected m 1 t-statistic of differentially expressed genes to generate the permutation t-statistics s

b

= {s 0b, s 1b}, where s 0bis the set for the non-differentially expressed genes, and s 1bis the set for the differential expressed genes such that s 0b= f t 0band s 1b= f t 1b+

to a set of randomly selected m 1 t-statistic of differentially expressed genes to generate the permutation t-statistics s

b

= {s 0b, s 1b}, where s 0bis the set for the non-differentially expressed genes, and s 1bis the set for the differential expressed genes such that s 0b= f t 0band s 1b= f t 1b+  , where t 0band t 1bare the vectors of the t-statistic, δ is a vector of the effect size and

, where t 0band t 1bare the vectors of the t-statistic, δ is a vector of the effect size and  is the vector of the sample standard deviation.

is the vector of the sample standard deviation. -

6.

Store the permutation statistics s b .

-

7.

Repeat 3-6 for all possible permutations, b = 1, 2, ..., N, where N = (n 0p+n 1p) C n 0p

-

8.

Construct the null distribution by pooling all permutation statistics from the set of non-differentially expressed genes s 0 = {s 01, s 02, ..., s 0N}. Find the 100×(α/2)th and 100×(1 - α/2)th percentiles as the critical values.

-

9.

Compute the number of significances for the true positives u b for each statistic in s 1bfor each permutation sample b = 1, 2, ..., N.

-

10.

Order u 1, u 2, ..., u N , and find the 5th percentile, denoted by u*.

-

11.

Compare u* to m 1 λ 0. If u* ≥ m 1 λ 0, stop and report n as the sample size estimate; otherwise, increase n by 1 and go to 2.

In the proposed algorithm, the permutation t-statistics of non-differentially expressed genes from all possible permutations were pooled to estimate the null distribution of the test statistics (Step 8). The number of true positives (U) was estimated for each permutation sample (Step 9) since the set of differentially expressed genes in each permutation sample were known. The distribution of the number of true positives U and its 5th percentile u* were estimated (Step 10). To reduce the excess variation of the permutation distribution, the proposed method includes the adjustment factor: f = f1f2. The adjustment factor consists of two scale factors: f1 and f2. The first factor, f1, accounts for differential sample sizes between the pilot study and the planned study and the second scale factor, f2, uses the maximum likelihood estimate of the t-statistic [12]. When the sample size of pilot data is large, both factors f1 and f2 converge to 1 and the proposed and the Tibshirani [10] methods are equivalent. (Note that Tibshirani's method was proposed based on the average formulation.) Since the permutation technique is used to estimate the critical value and the distribution of the sensitivity, no assumptions on the distribution of the t-statistic and the dependency among the statistics are made. Furthermore, the proposed method does not need to estimate the covariance matrix among all genes which can result in computation difficulty when the sample size of the pilot dataset is small.

Results

Two simulation analyses were conducted to evaluate the two formulations of sample size estimation described above. The first analysis evaluated the two formulations under the independent and constant effect size model. The theoretical results for the two formulations are shown in Table 2. The simulation analysis provides an empirical validation. The second analysis evaluated the four methods under a correlated model: 1) the univariate method (e.g., Jung [4]); 2) the Shao and Tseng [8] model-free method, 3) the Tibshirani [10] permutation method; and 4) the proposed permutation method. The univariate method is designed for the average formulation, while the three other methods are considered with 95% probability with a use of a pilot dataset. The same model parameters in Table 2 were used in the evaluation. The Type I error rate was based on setting the FDR at q* = 0.05. Note that there are many multiple testing FDR procedures with different strategies. For example, the Storey's FDR procedure [13] involved an estimation of the number of non-differentially expressed genes m0. However, to minimize the confounding effect brought by the variation in estimating m0, we simply used the true m0 in our simulation analysis. Sample sizes were calculated for the given parameter values. The empirical estimates of the FDR, average sensitivity λ and the probability ϕλ 0were then calculated and evaluated. Using the true m0 provides a direct validation of the proposed procedure with control of the FDR.

The purpose of the first simulation study was to validate the theoretical results of the sample size, sensitivity, and 95% probability for the two methods shown in Table 2 under the independent model. We generated 1,000 simulation samples with sample sizes per group from the Column 3 or Column 6 of Table 2. For the null model, m0 = m × (1 - π1) genes were generated from the independent standard normal N(0,1); for the alternative model, m1 = m × π1 genes were generated based on independent normal N(δ0, 1). For each simulation sample set, the t-statistics and the correspondent p-values were computed, and the numbers of false positives and true positives at the FDR level q* = 0.05 were recorded. The empirical estimates of the FDR, average sensitivity λ and probability ϕλ 0were then calculated. The estimate of ϕλ 0was the proportion of times out of the 1,000 simulations that the number of true positives was not less than m1 × λ0.

Table 3 shows the empirical results for the two methods. The empirical FDR appears close to the nominal levels in both approaches. For the univariate method, the empirical average sensitivity λ's are all at or above the desired levels, except for π1 = 0.05 and λ0 = 70%. The probability ϕλ 0is less than 50%, for π1 = 0.05 and λ0 = 70%. For the binomial method, the empirical average sensitivities λ's are all greater than the specified levels. Most of probabilities ϕλ 0's exceed 95% except for π1 = 0.05, λ0 = 60%, π1 = 0.10, λ0 = 90% and π1 = 0.20, λ0 = 70%. The empirical results of Table 3 are generally consistent with the theoretical values shown in Table 2. That is, the sample size calculated using the univariate method generally will achieve the specified sensitivity on average; however, the probability to achieve the specified sensitivity can be lower than 50%.

For comparison purposes, the mean and standard deviation of the sample size estimates from the proposed permutation method using a pilot dataset of group size 4 are also provided in the last column of Table 3. The pilot data were randomly generated from the normal distribution in each simulation. The proposed method tends to over-estimate the needed sample size by up to five arrays.

The second analysis was to evaluate the four methods, the univariate method (Jung [4]), Shao and Tseng [8], Tibshirani [10], and proposed permutation methods, under a correlated model using the well known colon cancer dataset [14]. The colon cancer dataset [14] consists of 22 normal and 40 colon tumor tissue samples with 2,000 genes. The analysis consisted of two steps. The first step evaluated the sample size estimates obtained by the three 95% probability formulation methods based on a pilot dataset of sample size 4 and 6 per group. The second step compared the sample sizes estimated by the proposed method from the first step with the estimates from the univariate method.

In the first step, 4 samples from the colon dataset were randomly selected without replacement from each group to form a pilot dataset. The algorithm described above was used to estimate the sample size for the proposed method and the Tibshirani [10] method. For example, for π1 = 5%, q* = 0.05 and λ0 = 90%, the initial sample size was n = 13 (Column 6 of Table 2) and α = 0.00249. A constant effect size δ

i

= δ0 = 2 was considered. For the proposed permutation method, the initial adjustment factors for f were f1 = 0.6777 and f2 =  = 1.155, while no adjustment was taken for the Tibshirani [10] method. For the Shao and Tseng [8] model-free method, a correlation matrix of t-statistics was estimated by using all possible permutation datasets from the pilot dataset. However, the Shao and Tseng [8] model-free method was found to have computational difficulty in most cases. Details are given later.

= 1.155, while no adjustment was taken for the Tibshirani [10] method. For the Shao and Tseng [8] model-free method, a correlation matrix of t-statistics was estimated by using all possible permutation datasets from the pilot dataset. However, the Shao and Tseng [8] model-free method was found to have computational difficulty in most cases. Details are given later.

The procedure was repeated 1,000 times to select different pilot datasets of size 4 from each group to account for the variation of pilot dataset. The means and standard deviations of the sample size estimates from the Tibshirani [10] and proposed methods were calculated and are shown in Columns 4 and 5 of Table 4. The univariate method is considered as the standard method, and the estimates are listed in Column 3. The needed sample size estimated from either the Tibshirani [10] or the proposed method is greater than that from the univariate method in each case. The difference between the univariate method and the proposed method is less than 5 arrays per group in each case. The mean and standard deviation estimates from the Tibshirani [10] method are much larger than the estimates from the proposed method. The difference increases as λ0 increases or π1 decreases. Note that, under the independent model, the sample size and standard deviation estimates from the proposed method are smaller (Table 3).

The procedure was repeated with 6 samples for the initial pilot dataset. The estimates are shown in Columns 6 and 7. The proposed procedure gives consistent results from the two pilot sample sizes; however, the results from the Tibshirani [10] method differ substantially. The Tibshirani approach does not adequately take the pilot sample size into consideration. When the pilot sample size is much smaller than the needed sample size, the overestimation of the sample size by Tibshirani [10] method becomes severe. As the pilot study size getting closer to the needed sample size, the Tibshirani [10] and the proposed methods will give similar results.

In our simulations, the Algorithm B in Shao and Tseng [8] couldn't successfully produce solutions for the pilot data of group size 4 in all 1,000 replications. When the group size increases to 6, the algorithm works only when π1 = 20%, λ0 = 60% and 70%; the mean (standard deviation) of the sample size estimates are 6.4(0.012) and 6.8(0.012), respectively. The estimated values appear too small to be correct. This method does not appear to be applicable for small pilot sample sizes. Using the entire colon cancer dataset [14] of 62 samples, the sample size estimates are shown in Column 8. The estimates generally need one or two more arrays than the univariate methods, but fewer than the proposed method. Since the Tibshirani [10] method gave larger estimates and the Shao and Tseng [8] gave smaller estimates than the proposed method. In the second step of analysis, the univariate method and the proposed method were evaluated.

Comparison of the performance of the two methods is similar to that shown in Table 3. The data were sampled without replacement from the colon cancer dataset, instead of from the normal random variables under the independent model. The sample sizes were based on Column 3 or Column 4 of Table 4. The data were then randomly permuted to remove the difference between two groups, and a common effect size δ0 = 2 was added to a set of randomly selected m1 genes in the tumor group. For each re-sampled data set, the permutation test was used to generate a p-value and the numbers of false positives and true positives were computed using q* = 0.05. The number of repetitions to compute the permutation test was 10,000. The empirical estimates of FDR, λ and ϕλ 0were computed. The entire procedure was repeated 1,000 times.

Table 5 shows the empirical estimates of q*, λ, and ϕλ 0for the two methods. Both methods are shown to control the FDR well and achieve the desired sensitivity. Thus the two methods can be expected to have satisfactory performance in practice. However, for the univariate method, the empirical ϕλ 0estimates are between 55% and 75%, except one at 80%. One would have to take a risk that the sensitivity can fall below the specified level.

The effect size of δ0 = 2 (Table 4) was used to validate the proposed permutation method under a correlated model using the colon cancer dataset [14]. In practice, the effect sizes can be much smaller. We calculated the sample sizes using the same parameters as Table 4 with an effect size δ0 = 1 for two pilot sample sizes 4 and 6. The sample size estimates are shown in Table 6. The proposed procedure gives similar results for the two pilot sample sizes, which are consistent with the results for δ0 = 2 in Table 4. The difference between the univariate method and the proposed method is about 15 arrays per group. The Tibshirani [10] method would require up to 67 and 35 extra arrays per group for 4 and 6 pilot samples, respectively. The estimates for the Shao and Tseng [8] method could be estimated only when the pilot study size is around or larger then the needed sample size.

Discussion and Conclusions

Determination of the needed sample size before conducting a microarray experiment is an important issue. The sample size problem is commonly formulated as the number of arrays needed to achieve the specified sensitivity λ on average. This paper demonstrates that the calculated sample size under this formulation may have the sensitivity λ at the specified level on average, but, the probability ϕ λ that the specified sensitivity is achieved can be low (less than 50%) due to the variance in sensitivity distributions. Furthermore, under this formulation this paper shows that the sample size can be calculated by a univariate method, regardless of the correlation structure among the gene expression levels; the procedures to account for correlations, such as Li et al. [6], are not needed (Table 5). These findings agree with the results reported by Jung [4] and Dobbin and Simon [11]. However, this paper provides a theoretical interpretation for this approach.

Under the confidence probability formulation, consideration of the dependency among gene expressions is necessary in estimating the sample size since the percentile of the sensitivity distributions not only depends on the effect size of individual genes but also on their correlations. We propose a permutation method based on the method proposed by Tibshirani [10], but with an inclusion of an adjustment factor and a requirement to achieve a specific sensitivity with 95% probability. The adjustment factor provides more accurate estimates of the power and sample size. Shao and Tseng [8] also formulated the needed sample size in terms of confidence probability. Under the normality assumption, Shao and Tseng [8] proposed algorithms for mild correlations among genes using a preliminary dataset. They showed that their approach worked well for an example dataset of 72 samples. However, using their Algorithm B in our simulation for the colon dataset (the average correlation for the colon dataset is about 0.4), the estimated variance of the true positives can be negative when the preliminary sample size is 4 or 6. Their procedure does not perform well for a small pilot dataset with small sample size. In practice, sample sizes of pilot data are often small. Our simulation studies show that our procedure can work well with 4 to 6 samples per group. However, our procedure seems to over-estimate the needed sample size when the correlations are very small, especially with small effect sizes. In this situation, our simulation results indicate that the factor f2 may not be necessary (data not shown).

The choice of a particular multiple testing procedure used for data analysis can affect the error rate and power in the sample size estimation. Using a conservative procedure in the data analysis may decrease the "power" of the study; sometimes, the calculated sample size may have sensitivity below the specified level. For example, in this paper the calculation is based on the true number of non-differentially genes m0. However, if the data analysis uses an overestimated m0 such as the Benjamini and Hochberg procedure [15], then the power may be below the desired level. An alternative is to use the total number of genes m instead of the number of non-differentially genes m0 to estimate the sample size. This procedure is expected to generate an appropriate sample size to achieve the desired sensitivity with a specified probability, regardless of which multiple testing procedure is used for data analysis.

References

Yang MCK, Yang JJ, McIndoe RA, et al.: Microarray experimental design: power and sample size considerations. Physiol Genomics 2003, 16: 24–28. 10.1152/physiolgenomics.00037.2003

Wang SJ, Chen JJ: Sample size for identifying differentially expressed genes in microarray experiments. J Comput Biol 2004, 11: 714–726. 10.1089/cmb.2004.11.714

Jung S-H, Bang H, Young S: Sample size calculation for multiple testing in microarray data analysis. Biostatistics 2005, 6: 157–169. 10.1093/biostatistics/kxh026

Jung S-H: Sample size for FDR-control in microarray data analysis. Bioinformatics 2005, 21: S3097–3104. 10.1093/bioinformatics/bti456

Pounds S, Cheng C: Sample size determination for the false discovery rate. Bioinformatics 2005, 21: 4263–4267. 10.1093/bioinformatics/bti699

Li SS, Bigler J, Lampe JW, Potter JD, Feng Z: FDR-controlling testing procedures and sample size determination for microarrays. Statist Med 2005, 24: 2267–2280. 10.1002/sim.2119

Tsai C-A, Wang S-J, Chen D-T, et al.: Sample size for gene expression microarray experiments. Bioinformatics 2005, 21: 1502–1508. 10.1093/bioinformatics/bti162

Shao Y, Tseng C-H: Sample size calculation with dependence adjustment for FDR-control in microarray studies. Statist Med 2007, 26: 4219–4237. 10.1002/sim.2862

Lee M-L, Whitmore G: Power and sample size for DNA microarray studies. Statist Med 2002, 21: 3543–70. 10.1002/sim.1335

Tibshirani R: A simple method for assessing sample sizes in microarray experiments. BMC Bioinformatics 2006, 7: 106. 10.1186/1471-2105-7-106

Dobbin K, Simon R: Sample size determination in microarray experiments for class comparison and prognostic classification. Biostatistics 2005, 6: 27–38. 10.1093/biostatistics/kxh015

Hedges LV, Olkin I: Statistical Methods for Meta-Analysis. Academic Press; 1985.

Storey JD: A direct approach to false discovery rates. Journal of the Royal Statistical Society, Series B 2002, 64: 479–498. 10.1111/1467-9868.00346

Alon U, Barkai N, Notterman DA, et al.: Broad patterns of gene expression revealed by clustering analysis of tumor and normal colon tissues probed by oligonucleotide arrays. Proc Natl Acad Sci 1999, 96: 6745–6750. 10.1073/pnas.96.12.6745

Benjamini Y, Hochberg y: Controlling the false discovery rate: a practical and powerful approach to multiple testing. Journal of the Royal Statistical Society, Series B 1995, 57: 289–300.

Acknowledgements

Huey-Miin Hsueh's research was done while visiting the NCTR. The authors are very grateful to reviewers for much helpful comments and suggestions for revising and improving this paper. The views presented in this paper are those of the authors and do not necessarily represent those of the U.S. Food and Drug Administration

Author information

Authors and Affiliations

Corresponding author

Additional information

Authors' contributions

JJC conceived the study and wrote the manuscript. JJC and WJL developed the methodology and proved theoretical results. WJL implemented the algorithms. HMH improved the concepts of the average and 95% confidence probability formulations. JJC, HMH and WJL performed the analysis. All authors read and approved the final manuscript.

Electronic supplementary material

12859_2009_3505_MOESM1_ESM.TXT

Additional file 1: The software for the algorithm of the proposed method. It provides software and an example for the algorithm of the proposed method. (TXT 3 KB)

Rights and permissions

Open Access This article is published under license to BioMed Central Ltd. This is an Open Access article is distributed under the terms of the Creative Commons Attribution License ( https://creativecommons.org/licenses/by/2.0 ), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Lin, WJ., Hsueh, HM. & Chen, J.J. Power and sample size estimation in microarray studies. BMC Bioinformatics 11, 48 (2010). https://doi.org/10.1186/1471-2105-11-48

Received:

Accepted:

Published:

DOI: https://doi.org/10.1186/1471-2105-11-48

,

,  , and t df, pis the p th percentile of a t-distribution with df degrees of freedom.

, and t df, pis the p th percentile of a t-distribution with df degrees of freedom. to a set of randomly selected m 1 t-statistic of differentially expressed genes to generate the permutation t-statistics s

b

= {s 0b, s 1b}, where s 0bis the set for the non-differentially expressed genes, and s 1bis the set for the differential expressed genes such that s 0b= f t 0band s 1b= f t 1b+

to a set of randomly selected m 1 t-statistic of differentially expressed genes to generate the permutation t-statistics s

b

= {s 0b, s 1b}, where s 0bis the set for the non-differentially expressed genes, and s 1bis the set for the differential expressed genes such that s 0b= f t 0band s 1b= f t 1b+  is the vector of the sample standard deviation.

is the vector of the sample standard deviation.