- Proceedings

- Open access

- Published:

A method for computing the overall statistical significance of a treatment effect among a group of genes

BMC Bioinformatics volume 7, Article number: S11 (2006)

Abstract

Background

In studies that use DNA arrays to assess changes in gene expression, our goal is to evaluate the statistical significance of treatments on sets of genes. Genes can be grouped by a molecular function, a biological process, or a cellular component, e.g., gene ontology (GO) terms. The meaning of an affected GO group is often clearer than interpretations arising from a list of the statistically significant genes.

Results

Computer simulations demonstrated that correlations among genes invalidate many statistical methods that are commonly used to assign significance to GO terms. Ignoring these correlations overstates the statistical significance. Meta-analysis methods for combining p-values were modified to adjust for correlation. One of these methods is elaborated in the context of a comparison between two treatments. The form of the correlation adjustment depends upon the alternative hypothesis.

Conclusion

Reliable corrections for the effect of correlations among genes on the significance level of a GO term can be constructed for an alternative hypothesis where all transcripts in the GO term increase (decrease) in response to treatment. For general alternatives, which allow some transcripts to increase and others to decrease, the bias of naïve significance calculations can be greatly decreased although not eliminated.

Introduction

The purpose of this work is to evaluate the statistical significance of treatments on the expressions in subsets of the genes on an array; for example, sets defined by gene ontology terms (GO terms, http://www.geneontology.org). GO terms group genes according to a biological process, molecular function, or cellular component. Inferences about the impact of a treatment are usually more straightforward when based on GO terms or equivalent groupings as opposed to lists of significant genes. Hence, we want to assess the statistical significance of the treatments on the group.

mRNA levels measured among genes within a GO group will be correlated. Correlations among genes involved in a common biological task are likely, and correlations are also expected simply because the set of expressions are measured within an animal and array, i.e., under shared conditions. The p-values computed in many packages[1, 2] assume independence and therefore could be misleading.

The statistical significance of a GO group is commonly assessed by counting the number of statistically significant genes in the group. The null hypothesis that this count is a random sample of the significant genes on the array is tested versus an alternative hypothesis that the count is enriched [1–3]. The test is Fisher's exact test (probabilities computed using a hypergeometric distribution) or one of its many approximations (e.g., chi-squared test which approximates the hypergeometric distribution with a binomial distribution) [4].

We do not test for enrichment primarily because the null distribution depends upon effects among interrogated genes that are unrelated to genes in the evaluated GO term. Put another way, the significance of a GO term should not depend upon whether or not other genes on the array (not in GO term) were affected by the treatments. This approach is not amenable to corrections for correlations among the p-values, since the test inherently assumes exchangeability among genes; an assumption which is not met under arbitrary correlation structures. Furthermore, the usual implementation simply counts 'significant' genes which precludes extracting supporting evidence from the 'not significant' genes.

The distribution of p-values for the individual genes on the array allows one to estimate the number of affected genes, and this estimate typically is larger than any list of 'significant' genes, which can be compiled with an acceptably low rate of misclassification [5–7]. Conceptually, methods that collate individual p-values within biologically meaningful groups can extract any supporting evidence for treatment effects from group members that individually cannot be identified as affected by the treatments.

We prefer an approach that is based on a p-value having a uniform distribution under the null hypothesis [8]. For any continuous probability distribution, x = F-1(1-p) transforms p to the distribution specified by F. Two choices for F, which are in common use for combing p-values, are the standard normal distribution, Φ(x), and the chi-square distribution with 2 degrees of freedom, F(x) = 1 - exp(-x/2). When p-values are independent, the distribution of the sum is straightforward for either normal or chi-squared deviates, and serves as a basis for testing the significance of a GO term. This is elaborated for the normal distribution in the next section, where we also develop a correction for correlations. The test based on the chi-squared deviate can also be corrected for correlation at least in a 'one-sided' case [9–12].

Randomization tests can deal with correlations among the genes in a GO term, and, when applicable, they should generate uniformly distributed null p-values [13–15]. Our studies usually process samples in batches and the estimation of treatment differences is essentially done within batches [6, 16–18]. Such analyses usually cannot be implemented as randomization tests and our motivation to develop the presented methods is largely in this context. We will present adjustments for a one-sample t-test because the results are transferable to pair-wise contrasts in a fixed-effects linear model. While the pair-wise contrasts are our real concern, their presentation carries algebraic baggage that is largely irrelevant to correcting for correlation and we have opted for streamlined notation, which focused on the fundamental issues in correcting for correlation. A one-sample t-test can also be implemented as a randomization test, which suggests presenting it as a competitor. The randomization test is constructed to have a uniform distribution under the null although for sample sizes as small as n = 5 the number of possible permutations may be a complicating factor. When both methods are applicable, we would not choose between them based upon their abilities under the null distribution but rather on a consideration of their power and/or robustness, which is well beyond the scope of this paper.

We will assume that the data are n observations coming from a population with mean vector, Δ and covariance matrix, Σ. Although simple, this model is directly applicable to some of our studies, and it serves here to focus on basic issues with relatively simple mathematics. For an example where this model can be used directly, see the test for gender differences in gene expression as estimated in Delongchamp et al. (2005)[16].

Methods

Meta-analyses

Several methods are used in meta-analyses to combine a set of p-values into an overall significance level. Under a null hypothesis, the p-value for a corresponding statistic is a random variable with a uniform distribution and it can be transformed to a convenient probability distribution [8]. Here, we use the inverse of the standard normal distribution. Then

z i = Φ-1(1 - p i )

is a random variable from the standard normal distribution, and when the set of p-values, {p i : i = 1,...,m}, are also independent, the statistic,

where m 11 = (1,1,...,1)', also has a standard normal distribution. So, the p-value,

gives an overall significance level for the set. We refer to this as the naïve estimate because it naively assumes that covariance of z is the identity matrix, cov(z) = I.

Adjusting for correlation

In studies which use DNA arrays, the expressions measured on an array will be correlated and these correlations imply that the set of p-values, {p i : i = 1,...,m}, are not independent. If the covariance of z is known, it is straightforward to modify the statistic so that it accommodates correlations. Suppose that cov(z) = R, then the variance of 1'z is 1'R1 and the appropriate p-value is

Note that the naïve estimator takes R = I implying no correlations among the m p-values.

When R is unknown, it must be estimated. In this context, it is useful to 'adjust' the variance of the naïve estimator. Let  be the average value of the off-diagonal elements of R, i.e.,

be the average value of the off-diagonal elements of R, i.e.,  = (1'R1 - m)/(m(m - 1)), then the implied adjustment is

= (1'R1 - m)/(m(m - 1)), then the implied adjustment is

That is,

The correction for Ponly depends on the average correlation,  , and not on the individual correlations. We believe that this allows one to generate an acceptable estimate in small data sets even though the individual correlations will be poorly estimated.

, and not on the individual correlations. We believe that this allows one to generate an acceptable estimate in small data sets even though the individual correlations will be poorly estimated.

A couple of insights can be drawn from Equation (1). Since the adjustment is less than 1 when

> 0; a significance level based upon the naïve version is too small. Further, the need for adjustment increases with the size of the subset, i.e., m.

Estimating a correction for correlation

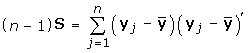

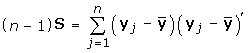

Continuing with the data model described in the Introduction, the mean of n observations is assumed to have a multivariate normal distribution with mean vector, Δ, and covariance matrix,  ; that is,

; that is,

Let

-

S estimate Σ;

-

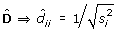

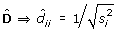

D be a diagonal matrix;

; estimated by

; estimated by

The element-wise t-statistic for the null hypothesis, Δ = 0, can be written in vectorial form as

Since

is a consistent estimate of D, the t-test approximates the z-test for large n, i.e.,  as n increases. The cov(z) = DΣD, which is estimated by

as n increases. The cov(z) = DΣD, which is estimated by  S

S .

.

Then for a one-sided p-value with an increasing alternative, t → z implies that the appropriate correlation matrix in Equation 1 is R = DΣD, which is approximated by  =

=  Σ

Σ . Note that for a decreasing alternative, the same correlation applies for z = Φ-1 (p).

. Note that for a decreasing alternative, the same correlation applies for z = Φ-1 (p).

t-test

The one-sided p-value for a null hypothesis on Δ is based on the distribution of the statistic,  . Let

. Let  and u

j

= ay

j

, and note that

and u

j

= ay

j

, and note that

Further, the variance of

satisfies

So

This provides an alternative way to compute Equation (1), which has some advantages. First, one only needs to know

, which is computed in the by-gene analyses. Second, the by-gene analysis applied to {u j : j = 1,...,n} computes the statistic and its p-value directly. So algorithms are simpler. Finally, the p-value is based on a t-distribution, which better reflects the effect of small samples.

Morrison [19] derives Hotelling's T2 test in the context of t-statistics of linear combinations, and presumably such statistics have a history nearly as long as multivariate statistics. O'Brien [20] examined this specific statistic as a method for comparing multiple endpoints in clinical trials. Lauter [21] noted that the null distribution is not the t-distribution with small sample sizes and explained a modified statistic, which corrects this. While the modified statistic controls the Type 1 error, the modification seems to reduce the power relative to O'Brien's statistic (simulations not reported).

One- versus two-sided tests

So far, we have been discussing the case where pi is a one-sided p-value. Essentially, Equation 1 applies whether p

i

is a p-value from a one-sided test or a two-sided test. However, the covariance of z is not the same as the covariance of its absolute value, |z|. Consequently a two-sided test in which  , needs a different adjustment than a one-sided test in which p = 1 - Φ(z).

, needs a different adjustment than a one-sided test in which p = 1 - Φ(z).

For a two-sided test, the null distribution of 1'|z| can be generated through Monte Carlo samples from the null distribution of z, MVN(0, cov(z)). That is, let z1,...,z k be pseudo-random samples from the multivariate normal distribution, MVN(0, cov(z)), and directly compute the p-value for the observed value, ψ = 1'|z|, as

where I(A) is an indicator function which gives 1 if A is true, or 0 otherwise. In simulations where Σ is specified, the adjustment  can be implemented based upon R,

can be implemented based upon R,  or I. In the two-sided case, it is also of interest to generate samples from MVN(0, cov(z)) where

or I. In the two-sided case, it is also of interest to generate samples from MVN(0, cov(z)) where  is computed from

is computed from  and

and  . In theory, adjustments based upon R correct the p-value for correlations, and for large enough n so will

. In theory, adjustments based upon R correct the p-value for correlations, and for large enough n so will  and

and  . In practice,

. In practice,  or

or  must be useful when n is quite small. Their utility in this regard can be illustrated with simulated data.

must be useful when n is quite small. Their utility in this regard can be illustrated with simulated data.

Results

Simulation

The theory outlined in the previous sections provides adjustments which will work well for large sample sizes. It does not guarantee much when sample sizes are as small as is seen in most studies that use DNA arrays. We simulated a few 'representative' cases to illustrate that P computed from the naïve statistic can be very inaccurate and to demonstrate that the adjustments proposed herein give useful corrections with small sample sizes.

The 'representative' simulations assumed a covariance matrix, Σ, for m = 20 correlated genes. We constructed Σ = D-1RD-1 by specifying R and D. The correlation matrix, R, is given in Table 1,  ≈ 0.45. These correlations were randomly selected to be between 0.35 and 0.55. Correlations in this range are commonly observed in our studies. Likewise, we randomly selected 20 variances in the range typical of our studies (Table 2). For a given sample size, n, pseudo-random samples y1,y2,...,y

n

were generated from a multivariate normal distribution, MVN(0,Σ), and P was computed using Equations 1 or 2. Since Σ is known, these computations can be based upon R,

≈ 0.45. These correlations were randomly selected to be between 0.35 and 0.55. Correlations in this range are commonly observed in our studies. Likewise, we randomly selected 20 variances in the range typical of our studies (Table 2). For a given sample size, n, pseudo-random samples y1,y2,...,y

n

were generated from a multivariate normal distribution, MVN(0,Σ), and P was computed using Equations 1 or 2. Since Σ is known, these computations can be based upon R,  ,

,  or I. This procedure was iterated at least 10,000 times to observe enough P-values to adequately estimate the empirical distribution.

or I. This procedure was iterated at least 10,000 times to observe enough P-values to adequately estimate the empirical distribution.

Figure 1 plots the cumulative distributions of P-values from a one-side test with n = 5. Ideally the null p-values follow a uniform distribution, the diagonal line in this figure. The naïve P-value (cyan line) grossly overstates the significance of the test statistic with roughly 30% of these P-values being less than 0.05. The P-values (red line) computed using Equation 1 with the true correlation, R, have the expected distribution; the observed departures from the diagonal are within the variation associated with estimating the uniform distribution by an empirical distribution (Kolmogorov-Smirnov test, p = 0.21). The corrected p-values based on the t-test method (blue line) and the estimated correlation,  (green line), are near the diagonal albeit not perfect. However, they offer a big improvement over naïve values.

(green line), are near the diagonal albeit not perfect. However, they offer a big improvement over naïve values.

Cumulative distribution of p-values for one-sided test case with sample size n = 5. The corrected p-values based on a t-distribution (blue line) and the  (green line) are near diagonal, while the naïve p-value (cyan line) overstates the significance of the test. We can correct the problem when we know the true correlation, R between the genes in a GO term (red line).

(green line) are near diagonal, while the naïve p-value (cyan line) overstates the significance of the test. We can correct the problem when we know the true correlation, R between the genes in a GO term (red line).

The corrections are expected to improve with larger sample sizes, and this is illustrated for n = 15 in Figure 2 where all of the correction methods are fairly accurate. Note also that the distribution for the naïve P-value does not respond to increasing sample size and remains very inaccurate. Figures 1 and 2 were generated from 10,000 iterations. Essentially, the empirical distributions for the corrections plotted in Figure 2 are not statistically different, and discrepancies with our expectations most likely reflect the variability among the plotted distributions. For example, we would expect the t-test version to be at least as good as the correction based on  , and this is not apparent in Figure 2.

, and this is not apparent in Figure 2.

In practice we use the t-test correction because it is easiest to compute. Figure 3 plots the interval, 0.0001 to 0.1, for the t-test correction where the empirical distributions were estimated from 1 million iterations. This figure illustrates the convergence toward the diagonal as sample sizes increase. Even at n = 5, the accuracy is acceptable for our purposes and represents a substantial improvement over the naïve calculation. For example, the nominal level of 0.05 would actually be 0.08; much closer to the nominal level than the naïve test at approximately 0.3 (Figure 1).

Cumulative distribution of p-values for t-test correction with sample size n = 5, 10, 15, 20. The convergence toward the diagonal as sample sizes increase is illustrated by the p-values in the interval, 0.0001 to 0.1, for the t-test correction where the empirical distributions were estimated from 1 million iterations.

Figure 4 and Figure 5 show the results for simulations of the two-sided case, n = 5, 15 respectively. Clearly, the naïve P-values should not be used because they are inaccurate. The empirical distributions for the other methods in these figures involve considerable computation since each P-value is generated by Monte Carlo sampling. To speed up computations, we terminated Monte Carlo sampling when  or k = 106. Consequently, small values of P are more accurately estimated than large values. In practice, we do not need to estimate large values with as much accuracy as small values so this is not a bad strategy. However, it makes it more difficult to evaluate whether the correction follows the diagonal. For small values, say < 0.1, the corrections are comparable in accuracy to those in the one-sided simulations. The correction based upon

or k = 106. Consequently, small values of P are more accurately estimated than large values. In practice, we do not need to estimate large values with as much accuracy as small values so this is not a bad strategy. However, it makes it more difficult to evaluate whether the correction follows the diagonal. For small values, say < 0.1, the corrections are comparable in accuracy to those in the one-sided simulations. The correction based upon  (blue line) seems preferable since it appears to be more consistent with the correction based upon the true correlation, R (red line).

(blue line) seems preferable since it appears to be more consistent with the correction based upon the true correlation, R (red line).

Discussion

Correlations among gene expressions within a GO term invalidate the computed p-value when it is based upon assumed independence. Such estimates overstate the significance when the correlations are positive. This behavior was demonstrated analytically through a specific statistic, Equation (1), as well as through simulations. The simulated data show that the bias of the naïve p-value can be substantial with moderate correlation.

For didactic reasons, we used a statistic and a scenario, which is mathematically tractable. However, it should be understood that overstating statistical significance is a problem for any statistic where the computed p-value for the GO term assumes independence among gene expressions. This is true for widely implemented tests which evaluate if significant genes are 'over-represented' within a GO term. In these tests, p-values are based upon the hypergeometric distribution (Fisher's Exact Test) or its binomial or chi-squared approximations, and an assumption of independence is essential to the construction of the null distribution. In addition to the presented statistic, there are other meta-analysis tests based upon a uniform distribution of null p-values. A theory-based adjustment for correlation is difficult to construct for these tests. Naïve versions are biased, but not always as severe as the estimate presented here. So, we have been pursuing corrections for them.

For the illustrated statistic, the naïve p-value is easy to correct when the correlation is known. In practice the correlation must be estimated from limited data. Under the one-sample t-test scenario, we can estimate the applicable correlation. The simulation of a 'representative' group of 20 genes shows that estimating the correlation improves upon the naïve p-value with as few as 5 samples.

In the one-sided case, a t-statistic can be computed which implicitly adjusts for the presence of correlations. This statistic is easy to implement in existing computer programs; essentially the program that computed by-gene p-values can be used. This procedure can be extended to any statistical test that can be applied to the individual genes. As presented here, the one-sided alternative specifies that all genes change in the same direction. It is trivial to apply the procedure for any pre-specified direction of change for each gene. As our knowledge of expression profiles from responses to toxicity grows, this approach might become a standard test in screening chemicals for toxicity.

In an exploratory context, it is not possible to pre-specify how individual genes will respond to treatment, and p-values must reflect the two-sided alternative. At least with the simulated data, the

method worked well to control the size of the test. Because  misrepresents the true correlation structure, we are cautious in recommending its general use and plan to simulate a broader set of scenarios.

misrepresents the true correlation structure, we are cautious in recommending its general use and plan to simulate a broader set of scenarios.

Conclusion

Reliable corrections for the effect of correlations among genes on the significance level of a GO term can be constructed for a one-sided alternative hypothesis. For general two-sided alternatives the bias of naïve significance calculations can be greatly decreased although not eliminated.

References

Tong W, Harris S, Cao X, Fang H, Shi L, Sun H, Fuscoe J, Harris A, Hong H, Xie Q, Perkins R, Casciano D: Development of public toxicogenomics software for microarray data management and analysis. Mutat Res 2004, 549: 241–253.

Khatri P, Draghici S: Ontological analysis of gene expression data: current tools, limitations, and open problems. Bioinformatics 2005, 21: 3587–3595. 10.1093/bioinformatics/bti565

Draghici S, Khatri P, Martins RP, Ostermeier GC, Krawetz SA: Global functional profiling of gene expression. Genomics 2003, 81: 98–104. 10.1016/S0888-7543(02)00021-6

Johnson NL, Kotz S: Discrete Distributions. New York: John Wiley & Sons; 1969.

Allison DB, Gadbury GL, Heo M, Fernandez JR, Lee C-K, Prolla TA, Weindruch R: A mixture model approach for the analysis of microarray gene expression data. Computational Statistics and Data Analysis 2002, 39: 1–20. 10.1016/S0167-9473(01)00046-9

Delongchamp RR, Bowyer JF, Chen J, Kodell RL: Multiple-testing strategy for analyzing cDNA array data on gene expression. Biometrics 2004, 60: 774–782. 10.1111/j.0006-341X.2004.00228.x

Schweder T, Spjotvoll E: Plots of p-values to evaluate many tests simultaneously. Biometrika 1982, 69: 493–502. 10.2307/2335984

Hedges LV, Olkin I: Statistical Method for Meta-Analysis. Academic Press; 1985.

Brown MB: A method for combining non-independent, one-sided tests of significance. Biometrics 1975, 31: 987–992. 10.2307/2529826

Kost JT, McDermott MP: Combining dependent p-values. Statistics & Probability Letters 2002, 60: 183–190. 10.1016/S0167-7152(02)00310-3

Xu X, Tian L, Wei LJ: Combining dependent tests for linkage or association across multiple phenotypic traits. Biostatistics 2003, 4: 223–229. 10.1093/biostatistics/4.2.223

Zaykin DV, Zhivotovsky LA, Westfall PH, Weir BS: Truncated product method for combining p -values. Genetic Epidemiology 2002, 22: 170–185. 10.1002/gepi.0042

Mootha VK, Lindgren CM, Eriksson KF, Subramanian A, Sihag S, Lehar J, Puigserver P, Carlsson E, Ridderstrale M, Laurila E, Houstis N, Daly MJ, Patterson N, Mesirov JP, Golub TR, Tamayo P, Spiegelman B, Lander ES, Hirschhorn JN, Altshuler D, Groop LC: PGC-1alpha-responsive genes involved in oxidative phosphorylation are coordinately downregulated in human diabetes. Nat Genet 2003, 34: 267–273. 10.1038/ng1180

Tian L, Greenberg SA, Kong SW, Altschuler J, Kohane IS, Park PJ: Discovering statistically significant pathways in expression profiling studies. PNAS 2005, 102: 13544–13549. 10.1073/pnas.0506577102

Simon R, Lam AP: BRB Array-Tools User's Guide, Version 3.3: (National Cancer Institute Biometric Research Branch, Bethesda, MD) Technical Report. 2005., 28:

Delongchamp RR, Velasco C, Dial S, Harris AJ: Genome-wide estimation of gender differences in the gene expression of human livers: statistical design and analysis. BMC Bioinformatics 2005, 6(Suppl 2):S13. 10.1186/1471-2105-6-S2-S13

Desai VG, Moland CL, Branham WS, Delongchamp RR, Fang H, Duffy PH, Peterson CA, Beggs ML, Fuscoe JC: Changes in expression level of genes as a function of time of day in the liver of rats. Mutation Research – Fundamental & Molecular Mechanisms of Mutagenesis 2004, 549: 115–129. 10.1016/j.mrfmmm.2003.11.016

Parrish RS, Delongchamp RR: Normalization. In DNA Microarrays and Statistical Genomic Techniques: Design, Analysis, and Interpretation of Experiments. Edited by: Allison DB, Page GP, Beasley TM, Edwards JW. Boca Raton, FL: Chapman & Hall/CRC; 2005:9–28.

Morrison DF: Multivariate Statistical Methods. New York: McGraw-Hill Book Company; 1967.

O'Brien PC: Procedures for comparing samples with multiple endpoints. Biometrics 1984, 40: 1079–1087. 10.2307/2531158

Lauter J: Exact t and F tests for analyzing studies with multiple endpoints. Biometrics 1996, 52: 964–970. 10.2307/2533057

Acknowledgements

TL was supported by an Oak Ridge Institute of Science and Education (ORISE) fellowship at NCTR.

Author information

Authors and Affiliations

Corresponding author

Additional information

Authors' contributions

RD conceived of the ideas presented herein, developed the main methodology and wrote the manuscript. TL conducted the simulations. CV reviewed literatures. TL and CV have been involved in development of the algorithm and drafting the manuscript. All authors read and approved the final manuscript.

Rights and permissions

Open Access This article is published under license to BioMed Central Ltd. This is an Open Access article is distributed under the terms of the Creative Commons Attribution License ( https://creativecommons.org/licenses/by/2.0 ), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Delongchamp, R., Lee, T. & Velasco, C. A method for computing the overall statistical significance of a treatment effect among a group of genes. BMC Bioinformatics 7 (Suppl 2), S11 (2006). https://doi.org/10.1186/1471-2105-7-S2-S11

Published:

DOI: https://doi.org/10.1186/1471-2105-7-S2-S11

; estimated by

; estimated by